Photography & Imaging (π) Research Group · Nankai University

We develop algorithms that improve image and video quality under degraded conditions. Our work spans a wide range of restoration tasks, from super-resolution and denoising to low-light enhancement, underwater image restoration, and all-in-one degradation removal, with a focus on real-world robustness and perceptual fidelity.

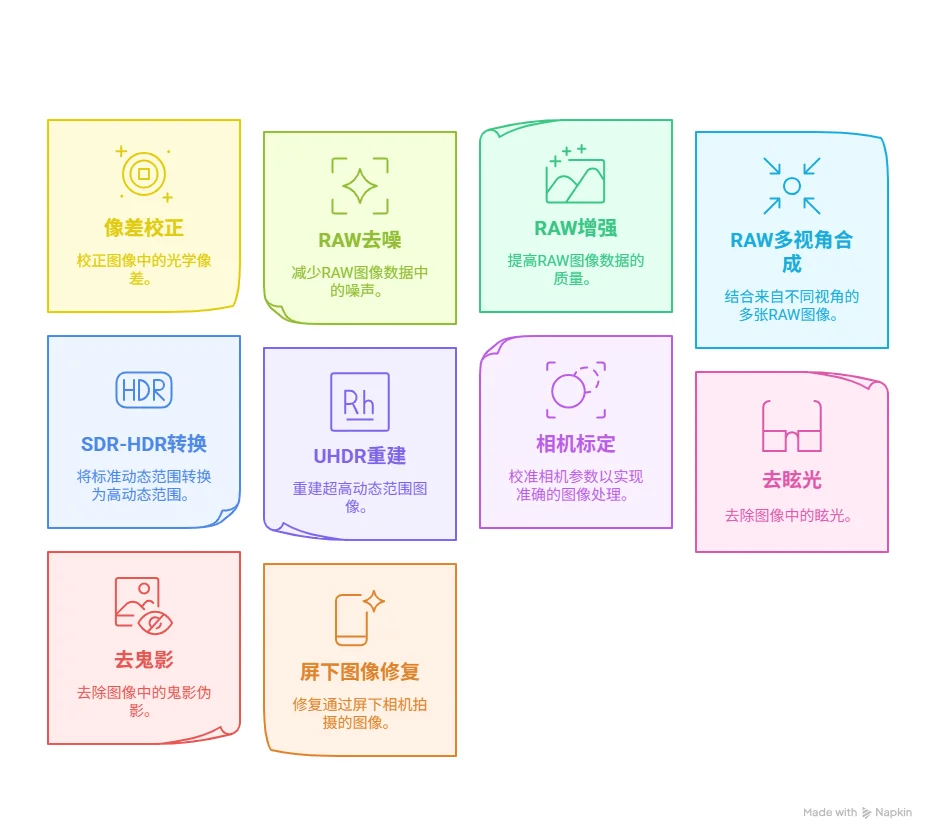

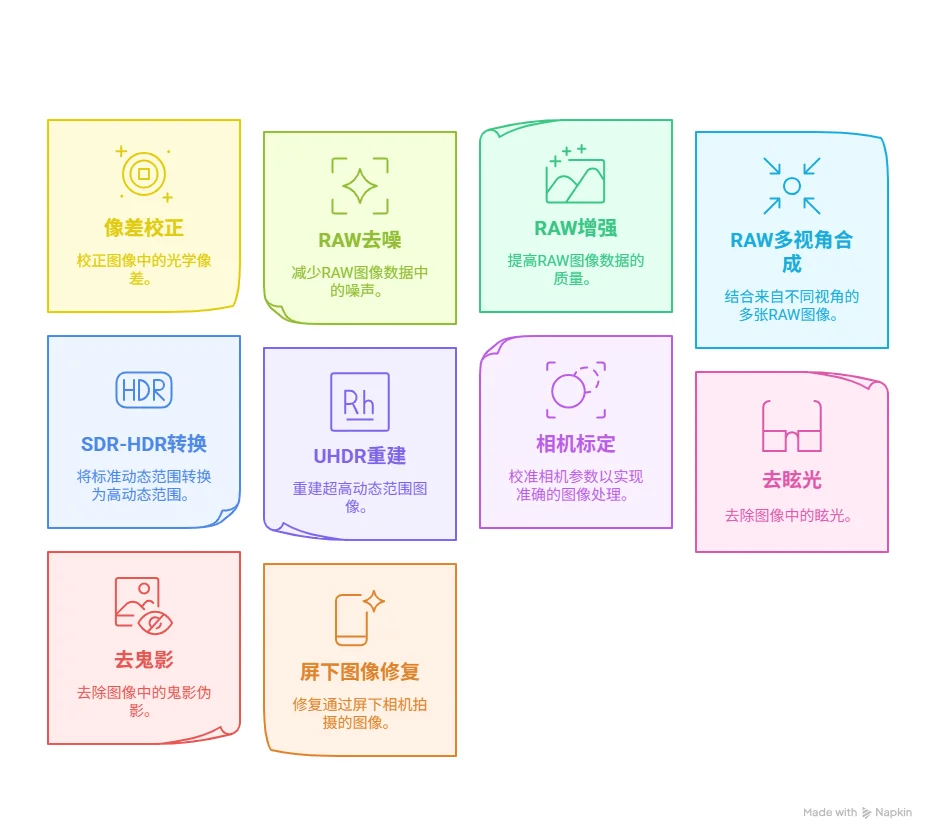

We push the boundaries of camera systems through computational methods. Our research addresses the full imaging pipeline — from RAW sensor data processing and aberration correction to HDR reconstruction and under-display restoration — enabling higher-quality visual capture across diverse hardware platforms and challenging environments.

We explore generative models for creative visual content production and precise editing. Our research covers identity-preserving video generation, high-resolution video synthesis, semantic image retouching, and efficient diffusion-based editing — bridging the gap between creative intent and photorealistic output.

We use large language models and vision-language models to advance image quality assessment, AI-generated content detection, and intelligent visual agents. Our work connects foundation model capabilities with practical imaging applications, enabling smarter, more automated visual workflows.

We tackle high-level visual perception in challenging environments, with a particular focus on underwater scenes. Our research covers object detection, salient object detection, camouflaged object detection, and instance segmentation, advancing the ability of AI systems to understand complex, real-world visual scenes.

We explore 3D scene representation, reconstruction, and understanding. Our research spans 3D Gaussian Splatting for novel-view synthesis and scene reconstruction, depth super-resolution, point cloud robustness, and neural rendering — enabling high-fidelity 3D visual understanding in diverse and challenging scenarios.